(this post originally appeared on designingsound.org)

We all work in creative fields doing creative work with creative people. Along with that creativity comes some amount of technology, great or small, and the requirement that we guesstimate how long each task will take. Making an entertainment product, no matter the medium, has elements of reinventing the wheel and huge swaths of unknown creative territory. The opportunities for innovation are often nearly impossible to schedule accurately down to the day or hour. Couple that with the fact that most professional creative endeavors entail dozens or hundreds of other people doing work that will impact our own. For these reasons, we need to accept that occasional crunch may be inevitable during a project’s lifespan, BUT crunch does not have to be an unending slog of 18-hour days, stale pizza, and sleeping under your desk.

As a manager it is my job to protect my employees as best I can from anything in the workplace that may negatively affect their quality of life. It is also my (unspoken) job to protect my own sanity. Below I will sketch out the means I have used to protect my employees and myself, and how I have learned to limit crunch to just a few days or weeks scattered throughout a project.

This is not just for managers; it’s for everyone. If you’re a one-person audio show, you are effectively your own manager. Even within an audio team, everyone needs to manage aspects of their own work. How and when we do our work can often save us some pain in the end.

Front load

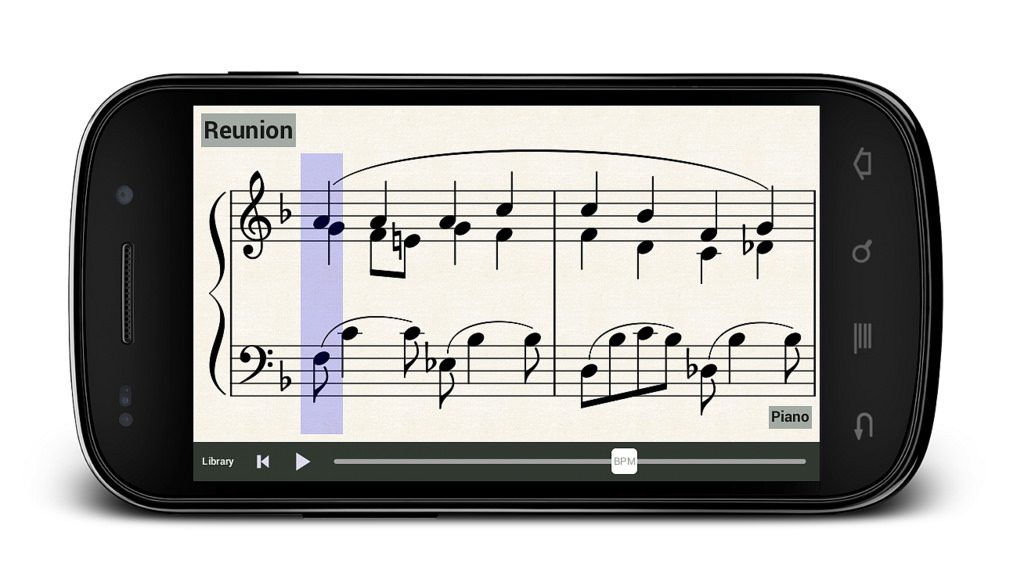

One of the most important ways to get a project into a good spot toward the end of its lifecycle is to front load asset creation wherever possible. This often means identifying elements of the project with a lower probability of changing even as the scope or design of the project itself evolves. Ambience, character foley, and physics impacts are often places we can do the most early work without losing time and assets to changing direction. If your project will have tons of breakable crates, you can probably schedule a wood breaking session before the crates even exist in your project and cover a comprehensive behavior set of breaking, sliding, and impacting wood. If your project takes place in a modern-day city, you can start identifying the ambient sounds you’ll need and begin capturing and integrating them immediately. If you’re in a medieval fantasy world, you can do the same and use your imagination a bit more to help fill in the voids. Concept art is always helpful, both for inspiration and for maintaining a direction that will match the visual aesthetic. Same goes for characters. If you have sketches of the characters and their clothing, ideally with material callouts, you can start amassing the materials you’ll need for clothing and footsteps. The odds of finishing these broad categories of sound design early are low, but having the bulk done before production shifts into high gear will ideally give you time later for more critical sound design tasks.

As with any of these strategies, it is imperative to be in communication with the rest of the team when making choices here, so we can make smart decisions about what we work on. If the design of a character is still in flux, obviously we would not want to invest our time in design work that may be thrown out in a month or a year. It is critical to work smartly, whether in pre-production or marching toward an end date, if we want to prevent as much crunch as possible.

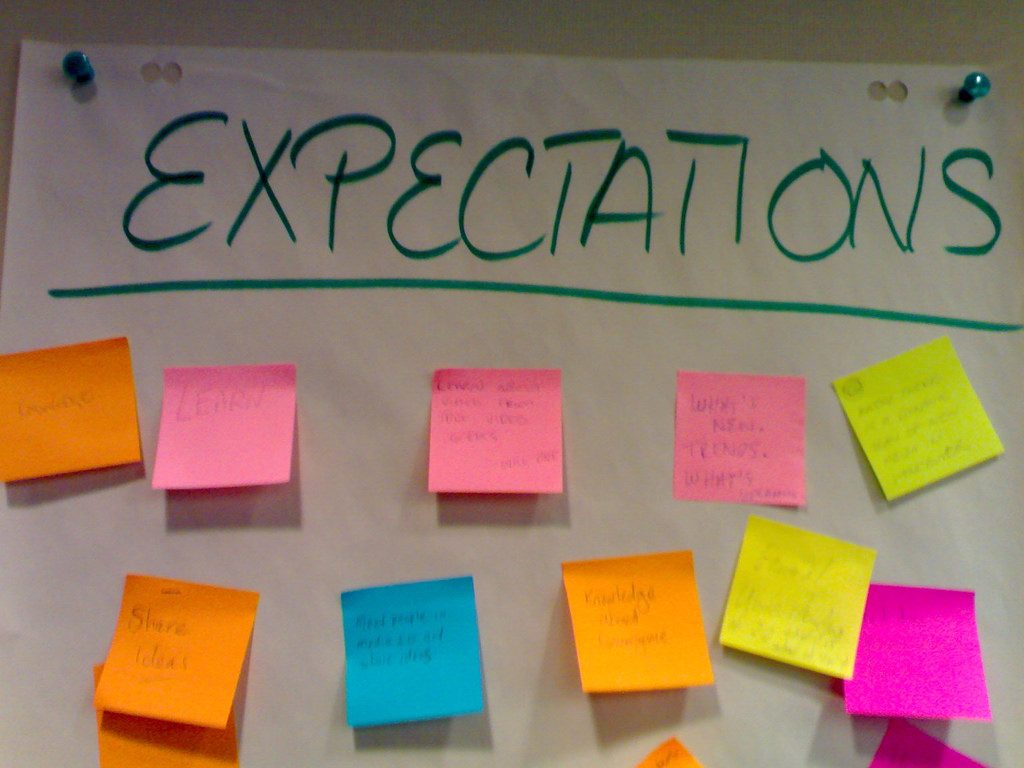

Set expectations

When it comes down to it, there is no way we can successfully relieve ourselves of most of our crunch without setting expectations with the rest of our production team. For most other disciplines, what audio does is a black box of unseen (but heard) magic. Therefore, we need to be exceptionally proactive in communicating with the greater team. Getting information from them about features and schedules as early and often as possible is essential. Check in frequently in whatever way(s) you can to ensure there are as few surprises as possible throughout production.

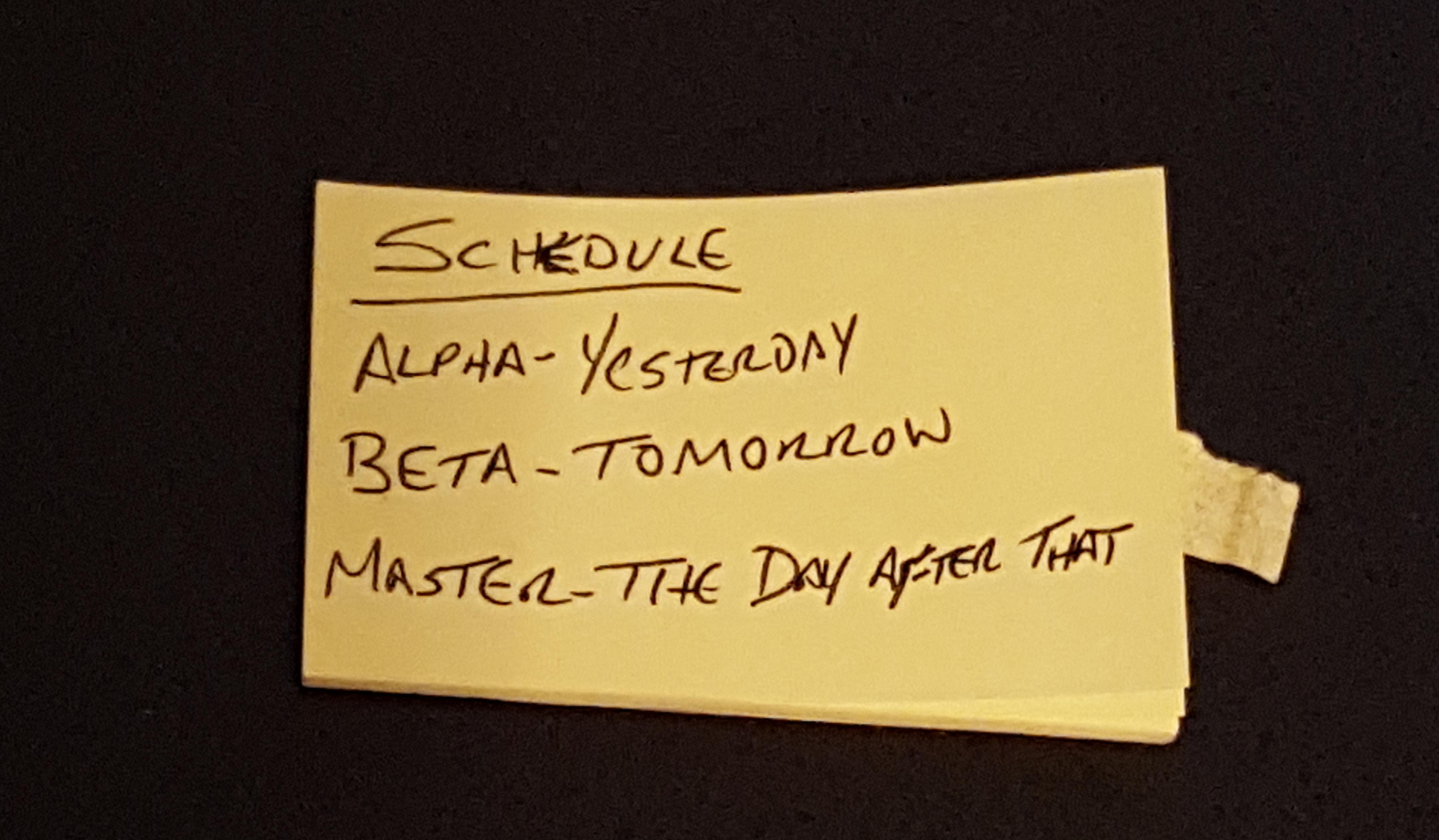

Inevitably, there will be hiccups: miscommunications, new features, forgotten meeting invitations, etc. It is critical that we are clear in communicating audio schedules and make it understood that when other people’s schedules change, we have no choice but to follow suit. Once a team understands the cascading effect of changes, that understanding can lead to a better sense of planning and a more conservative approach to last-minute, risky additions. In a perfect world, it will lead to extended deadlines for audio and other tail-end team members, but this is unfortunately still the exception to the rule. There are some studios that provide a grace period for audio work compared to other disciplines. This should not be the exception, but to make it a standard rule, we must earn trust from others in order to gain this additional flexibility and time to keep working after others are locked out.

This trust and understanding is something that must be continually fostered. Part of our jobs beyond sound design, mixing, and integration is education. We need to be teaching the team not just about what we do, but how their decisions affect those of us downstream and the kind of lead-up time we need to effectively respond to changes.

It is also critical as managers and sound professionals that we stand our ground. It’s a hard pill to swallow that it can be okay, if not imperative, to say no to team member requests, and there is a fine line to tread. Obviously we want to make the best sounding product we can. We also don’t want to make a habit out of saying no to external requests, as this can cause serious professional problems. Instead, we need to always have the macro view of the project in mind and use this to ensure that others’ decisions that will affect audio are thought out, and that the ramifications for our team and the project are understood and considered.

Part of what we are doing through production is trying to balance quality versus quality of life. We all want to do the best job possible and make something we are proud of at the end of the day. But killing yourself, having your health affected, or being broken by crunch does not make it worthwhile.

A successful strategy I have employed in my projects is to organize delivered sounds into a few categories: temp/placeholder, which is usually just a means to get something stubbed in and is blatantly not final quality; shippable, which is good enough to ship but not good enough to make you happy when you hear it; and final, which is a polished, perfect sound. Get all your sounds to a shippable quality first, and then bring your most important sounds to final level. If you have additional time, bring a batch of the next-most-important sounds up to a final quality bar, and repeat until it’s pencils down time. It can be exceptionally difficult to be comfortable signing off on sounds that are just “good enough.” But where health is concerned there is a balance that must be struck. “Work smarter, not harder” is a key mantra to quality of life in the workplace. And part of working smarter is identifying which sounds are not critical enough to take time out of your schedule for further iteration and polish, and knowing when something is good enough to sign off on and move along.
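For anyone who likes to see this kind of triage written down, here is a minimal Python sketch of the two passes described above: everything to shippable first, then polish to final in order of importance until the time runs out. It is only an illustration under assumptions. The names `Sound` and `plan_polish`, the priority ranking, and the hour estimates are all hypothetical and do not come from any real tool or project.

```python
from dataclasses import dataclass
from enum import IntEnum


class Quality(IntEnum):
    TEMP = 0       # stubbed in, blatantly not final quality
    SHIPPABLE = 1  # good enough to ship
    FINAL = 2      # polished


@dataclass
class Sound:
    name: str
    priority: int              # hypothetical ranking: 1 = most important
    quality: Quality
    hours_to_shippable: float  # assumed estimate: temp -> shippable
    hours_to_final: float      # assumed estimate: shippable -> final


def plan_polish(sounds, hours_available):
    """Return an ordered list of (name, target quality) that fits the time budget."""
    plan = []
    remaining = hours_available
    by_priority = sorted(sounds, key=lambda s: s.priority)
    level = {s.name: s.quality for s in sounds}

    # Pass 1: bring every temp sound up to shippable.
    for s in by_priority:
        if level[s.name] < Quality.SHIPPABLE and s.hours_to_shippable <= remaining:
            remaining -= s.hours_to_shippable
            level[s.name] = Quality.SHIPPABLE
            plan.append((s.name, Quality.SHIPPABLE))

    # Pass 2: with whatever time is left, polish the most important sounds to final.
    # A sound that does not fit is skipped so a smaller, lower-priority one can still fit.
    for s in by_priority:
        if level[s.name] == Quality.SHIPPABLE and s.hours_to_final <= remaining:
            remaining -= s.hours_to_final
            level[s.name] = Quality.FINAL
            plan.append((s.name, Quality.FINAL))

    return plan


# Made-up example data, purely for illustration.
sounds = [
    Sound("footsteps", 2, Quality.TEMP, 4, 6),
    Sound("weapon_fire", 1, Quality.SHIPPABLE, 0, 8),
    Sound("crate_break", 3, Quality.TEMP, 3, 5),
]
for name, target in plan_polish(sounds, hours_available=16):
    print(f"{name} -> {target.name}")
```

With 16 hours in this made-up example, both temp sounds reach shippable before any polish happens, and only then does the highest-priority sound get its final pass. The estimates will never be this tidy in practice, but the ordering is the point: nothing gets polished while something else is still a placeholder.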

Protect your People

As a manager, it is critical not just to save your sanity, but to try and protect your employees as well. Crunch is never easy, and these considerations should really be made for the entire team, not just your direct reports. It’s likely that everyone is going through their own personal hell during crunch, but you’re all going through it together. Checking up on each other is a great way to bond, grow stronger and more efficient as a team, and even stumble upon happy accidents and features.

When going through a crunch, it is also important to recognize the stress long hours can put on people’s lives. We all have a life outside of work: family, friends, children, hobbies, sports, etc. We need to support and foster our employees’ ability to have a life outside of work even during crunch. It helps to be more flexible with your employees during crunch when you can. If they work late, let them come in late. Ensure they don’t work 7 days a week. Cover for each other to give yourselves breaks during a tough schedule. Take some time every day away from your monitor, and ensure your employees do the same. Giving some leeway will help their morale, their sanity, and their productivity.

Our external activities are what define us as people and provide us our solace and happiness. Work provides a salary, and most of us are lucky enough that it also provides a creative outlet and often a way to be productive, make cool stuff, and have fun at the same time.

Time spent in crunch also needs to be considered once the long hours are done. Leniency here is also highly important to make up for the lost time in an employee’s life. Many organizations provide comp time after these periods, in effect giving employees free time off to recuperate, travel, be with their families, etc. If the place where I’m working does not provide comp time, or sufficient comp time, I will allow my employees to take a few extra days off when it makes sense anyway. It’s a small thing, but having this time to recover from long hours is critical to quality of life. When we ask more of our employees, we need to be prepared to give more as well.

Make it Fun

Crunch in some form may be inevitable, but that doesn’t mean it has to be a slog. If we have to go through a period of crunch, whether it’s a day or two months, try to keep the feeling light and fun among your team. It is also on us to help mitigate the pressures and pain of crunch by promoting more fun and stress-relieving activities at work. I’ve worked at one studio that played BINGO during dinner on crunch nights. Initially I thought it was bizarre. Isn’t bingo something old people play out of boredom? And if I was going to be at work at night, I’d rather just get my work done and go home. But it was a super fun, silly diversion for us that, oddly enough, people looked forward to. If we were going to be spending long days and nights at the office, we could at least take a half hour to goof off and laugh.

For my own part, I’ll invite the team over to my area after dinner for a drink. Anyone can bring a glass and we all enjoy a little sip of brown liquor before getting back to work. It takes twenty minutes out of our workday but provides a brief respite, a moment to relax inside the long hours and refocus for the remainder of the day. It bears noting that I didn’t come up with this idea myself; rather it developed organically at a previous studio where we were going through a particularly brutal crunch. We all took turns bringing in a bottle and amassed a frighteningly large collection of empties by the end of the project. It was a coping mechanism that developed into bonds of friendship that persist eight years later and counting.

Get Help

Obviously a great way to mitigate crunch is to get help. If you have the resources, hire some contractors to help your team out. Offloading work will allow you to focus on what’s important and get more of your shippable assets to a final level of polish. Of course, we often do not have the resources or even time to throw more bodies at the problem, but this is something that can be planned for earlier in production so the surprises that may creep up can be handled better towards the end.

As a manager it is part of your job to try and do right by your employees, but just as they are going through crunch and seeking solace and guidance from you, sometimes you need that same sounding board yourself. Your team is a great place for that, but if there are grievances or higher-level issues to discuss, you should take them to management or other leads. Often seeking help from others will bring to light production issues they may not be aware of or help you come up with new solutions to improve your process moving forward.

This article would probably be as appropriate if it were titled “How to be a Decent Manager,” as most of these issues are pretty obvious on the surface but often get lost or forgotten in the mire of production. Crunch should never be a defining factor of creative development. We are lucky we get to spend our working lives in a creative endeavor we are passionate about. But we also need to maintain time for ourselves outside of work. We need to constantly be fighting against the issues that can lead to crunch via communication, education, and getting pertinent work done early. At the same time, we need to accept that as deadlines creep up, there will inevitably be at least a few late nights interspersed into the schedule. To help the team deal with these periods, we need to be available to support them, and sometimes we need to be creative in either seeking out solutions for lessening the severity of crunch or at least turning it into something a little more fun.